Audrey Woods, MIT CSAIL Alliances | March 9, 2026

Imagine saying "I want a bookshelf" and watching a robot build one in minutes. Now imagine asking the robot to edit the shelf in real time, or even to turn the bookshelf into something completely different, like a chair or a table.

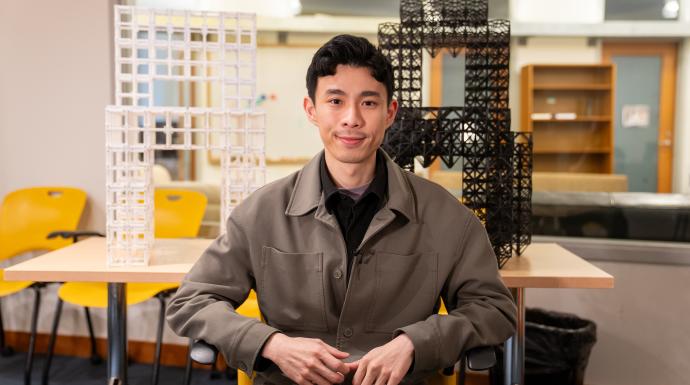

This kind of editable, on-demand reality is the vision guiding the research of MIT CSAIL graduate student Alexander Htet Kyaw, who is working toward a future in which the physical world is as fluid and responsive as the digital one.

FROM MYANMAR TO MIT: DESIGN AS A GATEWAY TO TECHNOLOGY

Kyaw's path to computer science began with architecture. As an undergraduate at Cornell University—where he studied architecture and computer science—he encountered digital fabrication tools for the first time and was enthralled. "I originally grew up in Myanmar and I didn't have access to these tools. When I came to the US, I suddenly had access to all these tools." Working with technology, Kyaw began to wonder: how do you actually collaborate with it to create something novel, even beautiful?

That question drew him to augmented reality and robotic fabrication, and ultimately to MIT. "I knew that I wanted to do something that's in the intersection of design and technology, and MIT has both of them." Collaborating with researchers in both the Media Lab and CSAIL, Kyaw now works primarily with Professor Randall Davis in CSAIL's Multimodal Understanding Group. "I am generally interested in how humans, machines, and the physical world around us work together: how we collaborate within physical space and how we make things."

FROM WORDS TO PHYSICAL OBJECTS

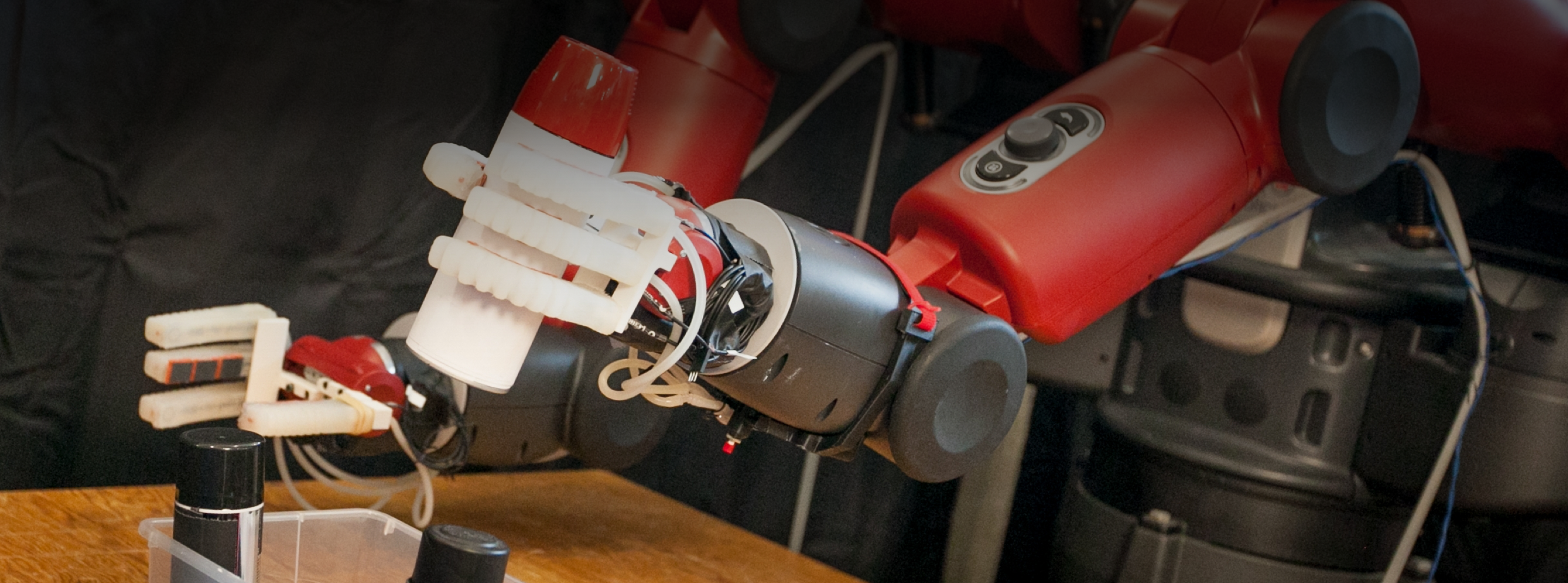

One of the first projects Kyaw worked on at MIT was Speech to Reality, a system that automates the pipeline from spoken request to assembled object. The work chains several AI technologies in sequence: a large language model (LLM) parses the speech and identifies the target object; a 3D generative AI model produces a mesh from that description; and a custom discretization algorithm converts the mesh into a component-level representation suitable for robotic assembly, automatically checking physical constraints (overhangs, connectivity, component count) before any piece is moved. The result is a complete object assembled in minutes.
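
To make the last stage of that pipeline concrete, here is a minimal Python sketch of what discretizing a shape into grid cells and checking it before assembly might look like. It is not Kyaw's algorithm: the function names, the cubic-cell representation, and the specific rules (face-adjacent connectivity, a cap on cells with nothing directly beneath them, a cap on total components) are all assumptions chosen for illustration.

```python
from collections import deque

Voxel = tuple[int, int, int]  # (x, y, z) grid cell; z points up

# Face-adjacent neighbor offsets (assumed connectivity rule).
NEIGHBORS = [(1, 0, 0), (-1, 0, 0), (0, 1, 0), (0, -1, 0), (0, 0, 1), (0, 0, -1)]


def discretize(points, cell_size: float) -> set[Voxel]:
    """Snap sampled surface points to the set of grid cells they fall in."""
    return {
        (int(x // cell_size), int(y // cell_size), int(z // cell_size))
        for x, y, z in points
    }


def is_connected(voxels: set[Voxel]) -> bool:
    """True if every cell can be reached from any other via shared faces."""
    if not voxels:
        return True
    start = next(iter(voxels))
    seen = {start}
    queue = deque([start])
    while queue:
        x, y, z = queue.popleft()
        for dx, dy, dz in NEIGHBORS:
            nxt = (x + dx, y + dy, z + dz)
            if nxt in voxels and nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return len(seen) == len(voxels)


def overhang_cells(voxels: set[Voxel]) -> list[Voxel]:
    """Cells above the lowest layer with no cell directly beneath them."""
    if not voxels:
        return []
    ground = min(z for _, _, z in voxels)
    return sorted(
        (x, y, z)
        for x, y, z in voxels
        if z > ground and (x, y, z - 1) not in voxels
    )


def check_constraints(
    voxels: set[Voxel], max_components: int, max_overhang_cells: int
) -> list[str]:
    """Return human-readable violations; an empty list means 'ok to build'."""
    problems = []
    if len(voxels) > max_components:
        problems.append(f"needs {len(voxels)} components, only {max_components} available")
    if not is_connected(voxels):
        problems.append("structure is not fully connected")
    hanging = overhang_cells(voxels)
    if len(hanging) > max_overhang_cells:
        problems.append(f"{len(hanging)} unsupported cells exceed limit {max_overhang_cells}")
    return problems


# A three-cell column with one cell sticking out at the top.
shape = {(0, 0, 0), (0, 0, 1), (0, 0, 2), (1, 0, 2)}
print(check_constraints(shape, max_components=10, max_overhang_cells=1))
print(check_constraints(shape, max_components=3, max_overhang_cells=0))
```

In this sketch the first call returns no violations, while the second reports both the component count and the overhang. A real system would need a more physical notion of support than "something directly below," but the shape of the check (validate the whole plan before the robot moves anything) is the same.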

Soon after, Kyaw published work that took the idea one step further: helping the system understand the function of an object. This project, which incorporates vision-language models (VLMs) into the pipeline, enables an assembly plan that accounts for how an object will actually be used, not just how it appears. Kyaw explains, "different components have different functionalities: structural components for the legs of a chair, surface components for the seat so that people can sit on it, etc. [This system] is trying to think about not just the geometry, but the functionality of the object."

A key differentiator of Kyaw’s work is the use of modular, reusable components rather than 3D printing. "There's this idea of abundance to generative AI, where you can just keep generating digital objects over and over again. But if you do that in the physical world, that takes a lot of resources, a lot of time, and it would be pretty bad for the environment." Kyaw wants to “leverage generative AI but also be aware of sustainability and reuse, and incorporate it into the workflow itself, not just as an afterthought.”

INDUSTRY APPLICATIONS: FROM THE FACTORY FLOOR TO THE SMART HOME

Kyaw describes these projects as "rapid prototyping tools to create something big." In other words, they give engineers and research teams a way to generate and evaluate physical forms without wasting materials, resources, and time. "We think in a very spatial manner, in 3D. It's just easier to see the 3D object out of the screen and then determine if you like it or not." For industries where physical form matters (product design, consumer goods, architectural components, medical devices), a conversational, robot-assisted iteration loop in real-world space could surface insights that purely digital workflows miss entirely.

Another potential application is residential. "There could be a robot in every person's house making furniture on demand as they need it. In the morning you need a bed and then that bed gets transformed into a table or a chair." This idea extends his Curator AI project, which took first prize at the MIT AI Conference's AI Build hackathon. Curator AI applies the same focus on 3D physical thinking to furniture retail, using augmented reality (AR) to scan a room and a VLM to recommend products matched to both the user's spoken description and the room's actual visual context.

Building on his previous research integrating hand tracking and augmented reality into design-fabrication processes, he is now working on a system that lets users define what they want through both speech and gesture (e.g., "I want a chair this tall and this long") and then has a robot construct the request in the room where it will be used. The goal is that level of human control and individual customization.

LOOKING AHEAD: CO-CREATION AND MORE

Kyaw's next research direction is physical co-design—iterating on a real object, in real space, in direct collaboration with a robot. "A lot of the time, when you design something, you design it in the digital world because it's easy to edit and move things around," he says. "But I'm arguing that because you can create physical objects in a very fast manner, maybe you can also design things after seeing them in real life. You can co-design with the robot, co-create something together. And then you're designing in the physical world."

Fundamentally, Kyaw believes that “our reality should be fluid and on-demand. I like the idea of saying what you want and getting something in real life, immediately. That’s the core motivation of my work.” For companies thinking about the future of fabrication, smart environments, or human-AI collaboration, that’s a future worth paying attention to.

Learn more about Alexander Htet Kyaw on his CSAIL page, his website, or his profile in MIT News.