Audrey Woods, MIT CSAIL Alliances | April 6, 2026

In his provocative recent article “The flavor of the bitter lesson for computer vision,” MIT CSAIL Assistant Professor Vincent Sitzmann wrote, “I believe that computer vision as we know it is about to go away.” The reason, he explains, is world models.

“We continue to fine-tune models for specific tasks like point tracking, segmentation, or 3D reconstruction—even as world models emerge, skirt all conventional intermediate representations, and directly solve a problem dramatically more general than everything our community has tackled in the past.” Just as the “LLM moment” revealed the radical potential of language modeling, Professor Sitzmann thinks a similar moment is coming for embodied intelligence, in which an AI system will need not an array of distinct tools to perceive and act, but a single, integrated model with a full understanding of physics and reality. “The decades-out goal is that we could load a pretrained AI onto a completely new robot—one it has never seen before—and it could explore its environment and figure out how both the world and its own body work.”

As leader of the Scene Representation Group at MIT CSAIL, Professor Sitzmann is working to create exactly such models.

VIDEO GENERATIVE MODELS: A PROMISING START

When LLMs first splashed onto the scene, there was hope that they would be the solution to embodied intelligence and robotics. “There were early attempts at fine-tuning LLMs to do stuff, and they kind of worked, but not really,” Professor Sitzmann says. “I think fundamentally the physical world works differently than language because it has continuous data—video and actions—rather than discrete text tokens. Language is the wrong language for embodied intelligence.”

Things have changed with the development of video generative models, which offer an exciting new avenue for training embodied intelligence and robotics. The question is how to use them. Because video is vastly more complex and higher-dimensional than language, “if you just ask a transformer to predict the next pixels [instead of words], it doesn’t work—it quickly devolves into images that just look like noise.” To address this, his group created Diffusion Forcing, a training paradigm that combines the strengths of full-sequence diffusion models (like Sora) and next-token models (like LLMs). It lets a model keep generating frames one after another, the way a language model keeps generating words, while remaining stable and therefore useful.

This project was the basis of Oasis, a viral online video game developed by the unaffiliated startup Decart AI, in which users play Minecraft inside a video model. “No one wrote a single line of code for this world. You give it a single frame and then it keeps hallucinating what would happen next.”
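For readers who want the intuition behind Diffusion Forcing, the sketch below contrasts how training noise levels could be assigned to each frame of a clip under the three approaches mentioned above. It is a minimal illustration, not the group’s code: frames are assumed to be plain lists of floats, the cosine noise schedule and the names `sample_noise_levels` and `make_training_example` are hypothetical choices, and the denoising network itself is omitted.

```python
import math
import random

T = 1000  # hypothetical number of noise levels (0 = clean, T = essentially pure noise)


def alpha_bar(k: int) -> float:
    """Assumed cosine schedule: fraction of signal variance kept at noise level k."""
    return math.cos((k / T) * math.pi / 2) ** 2


def add_noise(frame: list[float], k: int, rng: random.Random) -> list[float]:
    """Forward diffusion for a single frame at noise level k."""
    a = alpha_bar(k)
    return [math.sqrt(a) * x + math.sqrt(1 - a) * rng.gauss(0.0, 1.0) for x in frame]


def sample_noise_levels(num_frames: int, mode: str, rng: random.Random) -> list[int]:
    """Pick one noise level per frame for a training clip."""
    if mode == "full_sequence":
        # Full-sequence diffusion: every frame shares a single noise level.
        k = rng.randint(0, T)
        return [k] * num_frames
    if mode == "next_token":
        # Next-token prediction: past frames are clean, the next frame is
        # fully noised (effectively masked) and must be predicted.
        return [0] * (num_frames - 1) + [T]
    if mode == "diffusion_forcing":
        # Diffusion Forcing: each frame gets its own independent noise level.
        return [rng.randint(0, T) for _ in range(num_frames)]
    raise ValueError(f"unknown mode: {mode}")


def make_training_example(video: list[list[float]], mode: str, rng: random.Random):
    """Noise a clip frame by frame; a causal denoiser would learn to undo it."""
    levels = sample_noise_levels(len(video), mode, rng)
    noisy = [add_noise(frame, k, rng) for frame, k in zip(video, levels)]
    return noisy, levels
```

The interesting branch is the last one. Because training covers every mix of clean and noisy frames, the model can later condition on earlier frames at whatever noise level is convenient while it denoises the next one, which is one way to picture how long rollouts can stay stable.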

The “logical conclusion” of this work is Google Genie, a recently released foundation model for creating and exploring worlds. Given only an image or a text prompt, Google Genie can generate an entire “world” in pixels without any 3D modeling or assets, including an interactive character for the user to control. There are obvious uses in entertainment, such as graphics, video games, and movies, but the “real reason why Google and the others in my lab are pouring so much time and effort and money into this is because of the robotics applications.”

ROBOTICS AND EMBODIED INTELLIGENCE: A UNIVERSAL SIMULATOR

Think about reaching for a glass of water. We are rarely aware of it, but one critical step is for our brain to imagine, or simulate, the movement in the space around us, judging distances, forces, objects, and locations. In theory, a robot could use these video generative models in the same way: simulate an action, drawing on real-world understanding and simple image data, and then act on that simulation.

However, Professor Sitzmann explains, there are several key hurdles before we get there. “At the end of the day, the model just outputs pixels, and pixels don't control a robot. How do you use this for controlling a robot and for deciding what to do? That's essentially the cutting edge of questions right now.”

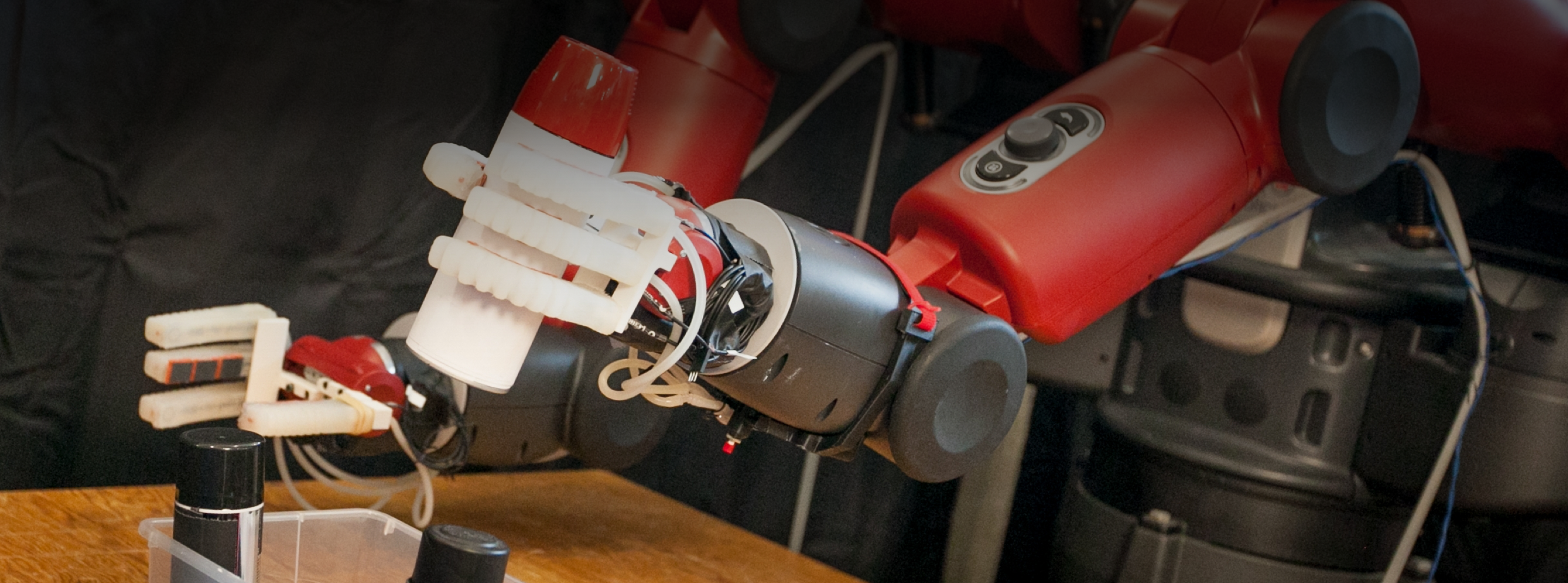

One option is the Large Video Planner, another project from Professor Sitzmann’s group, which uses a video model to “hallucinate what it would look like to solve the problem.” For example, the researchers give the model the first frame of a scene, such as a desk, and ask it to show a mug on the desk being picked up. The model then generates a video of someone picking up the mug, and “you can kind of treat that as a plan.” The project explored ways to map that plan onto a robot by tracking the generated human hand and having the robot copy its motions along the same trajectory. But Professor Sitzmann acknowledges this is just a first step toward a more robust solution.
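The last step, turning a generated video into robot motion, can be pictured with a small sketch. It assumes a hand tracker has already produced one 3D hand position per generated frame and that a fixed offset maps camera coordinates into the robot’s base frame. The helper names (`smooth_track`, `to_robot_frame`, `step_commands`) and the 5 cm step limit are hypothetical, and the actual Large Video Planner pipeline may differ substantially.

```python
import math
from dataclasses import dataclass


@dataclass
class Point3:
    x: float
    y: float
    z: float


def smooth_track(track: list[Point3], window: int = 3) -> list[Point3]:
    """Trailing moving average to remove frame-to-frame jitter in the hand track."""
    out = []
    for i in range(len(track)):
        pts = track[max(0, i - window + 1): i + 1]
        n = len(pts)
        out.append(Point3(sum(p.x for p in pts) / n,
                          sum(p.y for p in pts) / n,
                          sum(p.z for p in pts) / n))
    return out


def to_robot_frame(track: list[Point3], offset: Point3) -> list[Point3]:
    """Assumed calibration: camera and robot axes are aligned and differ by a translation."""
    return [Point3(p.x + offset.x, p.y + offset.y, p.z + offset.z) for p in track]


def step_commands(start: Point3, waypoints: list[Point3],
                  max_step: float = 0.05) -> list[Point3]:
    """Follow the waypoints with end-effector displacements no longer than max_step."""
    cmds = []
    cur = start
    for wp in waypoints:
        while True:
            dx, dy, dz = wp.x - cur.x, wp.y - cur.y, wp.z - cur.z
            dist = math.sqrt(dx * dx + dy * dy + dz * dz)
            if dist < 1e-9:
                break  # already at this waypoint
            scale = min(1.0, max_step / dist)
            step = Point3(dx * scale, dy * scale, dz * scale)
            cmds.append(step)
            cur = Point3(cur.x + step.x, cur.y + step.y, cur.z + step.z)
            if scale == 1.0:
                break  # reached the waypoint with this step
    return cmds
```

Capping each step is a conservative design choice: a generated video can make the hand jump between frames, and a real arm should not try to follow such a jump in a single motion.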

“Writing actual physics simulators is incredibly complicated and realistically, it just doesn’t work,” Professor Sitzmann says. “This is the first time we have a glimpse of a universal simulator that understands how physics works just by looking at data.” This is also one of the “key motivations for building humanoid robots,” because these world models are, so far, trained to understand human movement and action.

FUTURE WORK: THE GOLDFISH PROBLEM AND INTRINSIC REWARDS

As promising as video generators are, Professor Sitzmann is clear-eyed about the hurdles ahead. One of the most significant is what he calls the “goldfish problem”: these models currently have very short memories. In the Minecraft example, a player could look at a mountain and turn around, and when they turned back, the mountain might have vanished or changed shape. “The model is like a goldfish. It completely forgets what was happening if it isn’t right in front of it.” Solving this will require new architectures that let AI models maintain a consistent internal map of a world that persists even when the camera isn’t pointed at it.
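A toy model makes the goldfish problem easy to see. In the sketch below, which is an illustration only and not how any real video model is built, `WindowedWorldModel` can reuse a scene only while it is still inside its short context window; otherwise it has to invent a new one. `PersistentWorldModel` adds a simple map keyed by camera heading, one hypothetical way to picture a consistent internal map. The class names and the integer-heading world are assumptions made for the example.

```python
from collections import deque


class WindowedWorldModel:
    """Toy stand-in for a video model that only sees its last `context_len` frames."""

    def __init__(self, context_len: int):
        self.context = deque(maxlen=context_len)  # oldest frames silently drop out
        self.scenes_invented = 0

    def _invent(self, heading: int) -> str:
        # Anything not in memory must be hallucinated from scratch.
        self.scenes_invented += 1
        return f"scene#{self.scenes_invented}@{heading}"

    def render(self, heading: int) -> str:
        content = None
        for h, c in reversed(self.context):
            if h == heading:
                content = c  # still in context, so the view stays consistent
                break
        if content is None:
            content = self._invent(heading)
        self.context.append((heading, content))
        return content


class PersistentWorldModel(WindowedWorldModel):
    """Same toy model plus a map that outlives the context window."""

    def __init__(self, context_len: int):
        super().__init__(context_len)
        self.world_map: dict[int, str] = {}

    def render(self, heading: int) -> str:
        if heading not in self.world_map:
            self.world_map[heading] = self._invent(heading)
        content = self.world_map[heading]
        self.context.append((heading, content))
        return content


if __name__ == "__main__":
    for model in (WindowedWorldModel(context_len=2), PersistentWorldModel(context_len=2)):
        first = model.render(0)  # look at the mountain
        model.render(90)
        model.render(180)        # turn around
        print(type(model).__name__, model.render(0) == first)
    # prints "WindowedWorldModel False" then "PersistentWorldModel True"
```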

He is also drawing on training methods like reinforcement learning so that robots can learn from interaction and tactile experience. It’s currently difficult to “reward” a robot for physical learning the way a system can be rewarded for winning at chess. Professor Sitzmann and his group are investigating intrinsic rewards, that is, ways to design a machine that would “explore the world independently. A toddler does things out of curiosity, but we have no equivalent of that in robotics.”
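One widely used way to formalize curiosity in reinforcement learning, shown here as an illustration rather than as the group’s method, is to reward an agent for transitions that its own forward model predicts badly: novel experiences pay off, and familiar ones stop paying once they become predictable. The sketch assumes a tiny discrete world with numeric next states and uses a lookup table in place of a neural network; `CuriosityReward` and its learning rate are hypothetical.

```python
class CuriosityReward:
    """Intrinsic reward = squared error of a learned forward model's prediction."""

    def __init__(self, lr: float = 0.5):
        self.lr = lr
        self.predicted_next: dict = {}  # (state, action) -> predicted next state

    def __call__(self, state, action, next_state: float) -> float:
        key = (state, action)
        guess = self.predicted_next.get(key, 0.0)  # unseen transitions start at 0.0
        error = next_state - guess
        # Nudge the prediction toward what actually happened (online update).
        self.predicted_next[key] = guess + self.lr * error
        return error * error  # surprise is the reward


if __name__ == "__main__":
    curiosity = CuriosityReward()
    print([curiosity("cup", "push", 1.0) for _ in range(3)])  # [1.0, 0.25, 0.0625]
    print(curiosity("block", "lift", 1.0))                    # 1.0
```

Repeating the same push quickly becomes boring (the reward shrinks from 1.0 to 0.0625), while a never-tried action is rewarding again, a crude analogue of the toddler-like pull toward new experiences.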

Professor Sitzmann has clear advice for business leaders. “If I were in industry right now, I would assume the way robots work today is going to change dramatically over the next decade.” Similar to how LLMs are rapidly reshaping the very nature of work, he foresees a “huge shift” in robotics and automation, and he urges people to think about the positive implications as well as the challenges. “There are obviously social questions and major negatives, but it’s also empowering, because the user is in control. Extrapolate this to, for instance, care for the elderly. Some people say it's going to be a problem to have a robot caring for you, that you’re lacking human contact. On the flip side, I think it is incredibly empowering in the same way a dishwasher is empowering. You control the device, so it's you helping yourself.”

If the last decade was about teaching machines to read and write, the next may be about teaching them to understand reality itself, with Professor Sitzmann among those leading the charge.

Learn more about Professor Sitzmann on his website, his group’s website, or his CSAIL page.