WRITTEN BY: Matt Busekroos

Originally from Taiwan, Yen-Ling Kuo has always been interested in building intelligent systems to help humans solve problems. Before coming to MIT, Kuo was a software engineer at Google Mountain View working on visual search for shopping and new shopping advertisement features. She received her M.S. and B.S. in Computer Science and Information Engineering from National Taiwan University, where she completed her master’s thesis on crowdsourcing and reasoning of commonsense knowledge in the Intelligent Agents Lab.

Kuo’s research experience began with crowdsourcing commonsense knowledge to make intelligent systems more robust. This work gave her the opportunity to contribute to ConceptNet, a commonsense semantic network and toolkit developed at the MIT Media Lab, and to become a visiting student with the Software Agents Group at the Media Lab.

The visiting student experience exposed Kuo to various aspects of AI research, and she was drawn to the ideas she encountered. At the time, however, she accepted Google’s offer of a software engineering position to see how industry approaches AI problems.

Kuo’s projects at Google all involved using both text and image information to provide a better search experience in online shopping. From those projects, she learned that knowledge and information from different modalities can complement each other.

“For example, different merchants may use different names to describe the same color, so images are useful here; similarly, items may be occluded in the image, so the text description provides more information,” Kuo said. “This intrigues me to think more about how agents can learn to take actions using multiple modalities, especially the knowledge from humans, i.e. language and symbols.”

Kuo is now a Ph.D. candidate working alongside her advisor, Principal Research Scientist Boris Katz.

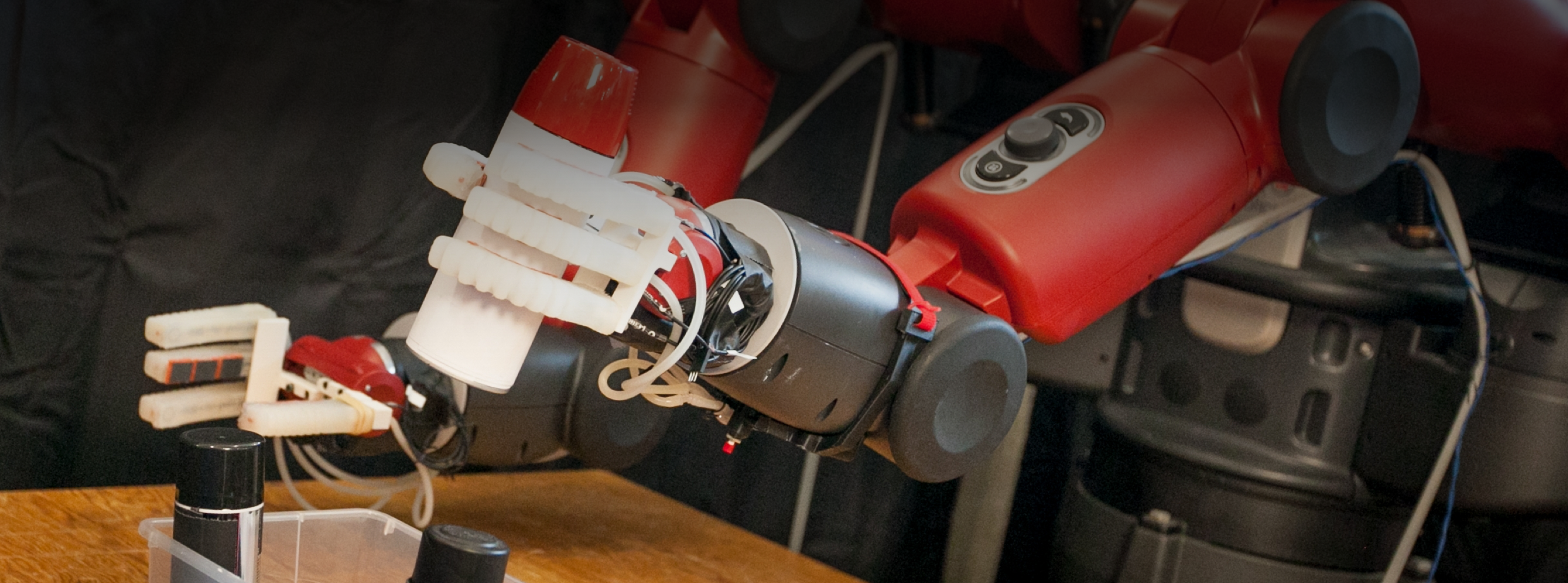

“Working with Boris’s group has been great to enrich my interdisciplinary research experience,” Kuo said. “As part of the CSAIL and CBMM communities, I had chances to work with different groups on building language interactions to human support robots, learning pursuit-evasion behaviors of birds, understanding how kids recognize and interpret others’ actions, and designing experiments to study compositional planning in rats. These experiences help me formulate my current research on robotic planning with natural language.”

Kuo said integrating planning models with language enables robots to reason about which of their actions satisfy the language instructions they are given.

“As robots become more available, no matter at home or on the road, this work will lead to more robust robots that understand what we want them to do and interact with us safely,” she said.

Kuo added that she loves applying knowledge from various areas to solve problems. She would like research in different areas to help each other move forward. In her current research, that means asking how language can help robotics and how robotics can be used to study language. She said she wants to bring this integrated knowledge to real-world systems to solve problems in ways that make an impact.