WRITTEN BY: Audrey Woods

No one can deny that excitement around artificial intelligence (AI) and machine learning (ML) seems to have exploded in recent months. With the arrival of programs like ChatGPT, DALL-E, and other examples of generative AI at work, the public has been made aware of just how far AI technology has come and how much it stands ready to change the economy and the world.

However, one looming question about the forecasted wave of AI is efficiency and sustainability. Programs such as ChatGPT are famously resource-hungry: training even a medium-sized language model can release as much carbon dioxide into the atmosphere as five cars emit over their lifetimes. For programs as large as the recently released GPT-4, or for the potential widespread adoption of autonomous cars, the estimates run much higher. While there is much to be gained from the development of AI technology (jobs made easier, economic gains, new technological possibilities, and so on), companies considering AI and ML solutions have reason to weigh the question of efficiency, and specifically methods for designing more efficient systems in these early stages of development.

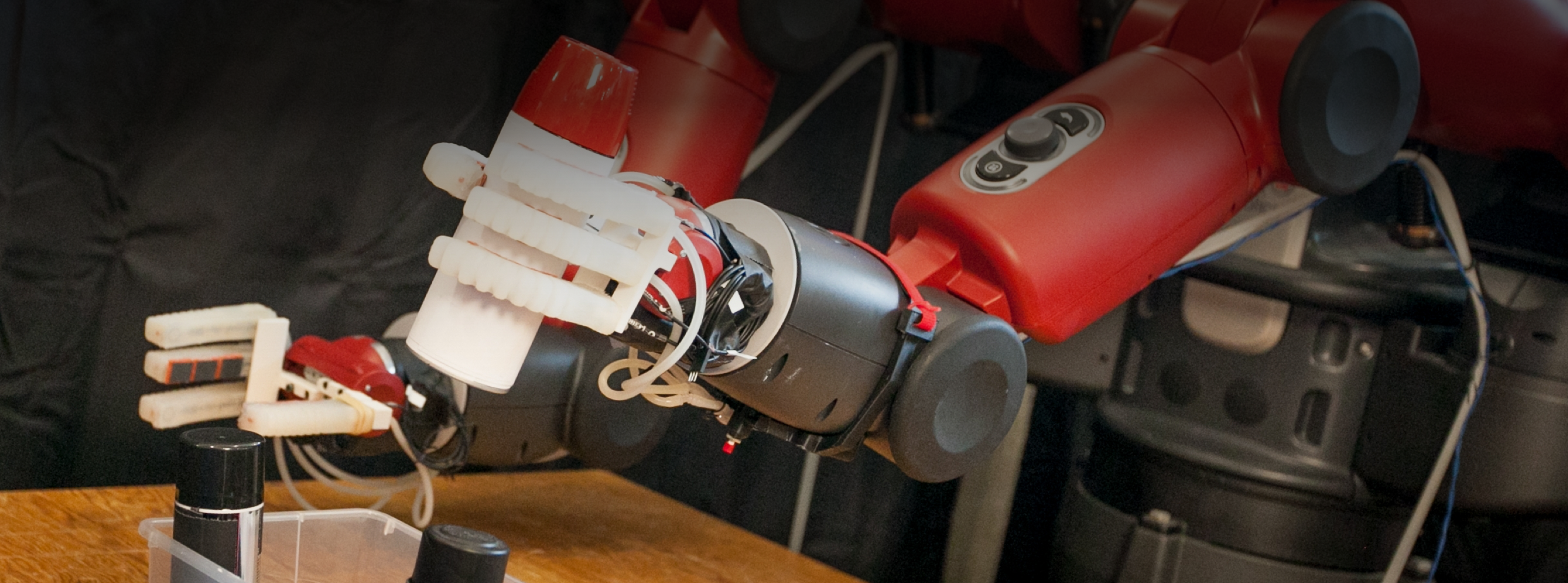

MIT Professor Vivienne Sze is working on precisely such methods, studying how to make both hardware and software more efficient and applying her findings to a wide range of fields, including autonomous navigation, healthcare, robotics, and more.

Finding Her Interest

Professor Sze was first drawn to efficiency when she was a grad student studying under Professor Anantha P. Chandrakasan, dean of the MIT School of Engineering and one of the world’s leading experts on low-power circuits. She says, “we were really interested in applying a lot of the low power techniques that he pioneered to the application of video compression,” which was an important problem in the days before the iPhone when being able to process and view video on small, portable devices was a “really exciting concept.”

This research led her to think about both the hardware and software limitations of the problem, or what she calls the “co-design approach of designing both the algorithms and the hardware to make it more energy efficient.” Expanding upon this work, Professor Sze helped develop the video compression standard for High Efficiency Video Coding (HEVC), which is now supported on the majority of today’s smartphones, totaling over a billion devices. Soon after, she returned to MIT to join the faculty and continue research in this area.

Combining Hardware & Software Solutions

On the scale of microchips, it’s hard to imagine distance being a critical problem, but Professor Sze explains that one of the primary reasons for inefficiency on the hardware level is the cost of moving data around. Moving data from memory storage to the processing chip is expensive and consumes both time and energy, especially at the large scales needed by something like a neural network or cloud-based intelligence system. One way Professor Sze has addressed this—in collaboration with MIT Professor of the Practice Joel Emer—was to develop a chip that made the dataflow of neural networks more efficient. The Eyeriss chip, an energy-efficient deep neural network accelerator, exploits methods such as local data reuse to minimize data movement and significantly reduce energy consumption. Professor Sze has also worked on emerging approaches that involve moving computing into the memory itself (referred to as “processing in memory (PIM)”) to reduce the distance of data movement. This research led to a proposed new architecture called RAELLA: Reforming the Arithmetic for Efficient, Low-Resolution, and Low-Loss Analog PIM. Such methods could be pivotal as AI frameworks become more common and widely adopted.
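
To make the cost of data movement concrete, the following is a minimal sketch of a toy energy model, not a description of how Eyeriss or RAELLA actually work. The relative costs in `COST`, the function name `relative_energy`, and the `reuse_factor` parameter are all illustrative assumptions; the only idea taken from the text above is that fetching data from distant memory costs far more than reusing it from local storage.

```python
# Toy model: relative energy of a workload with and without local data reuse.
# All cost values are assumed, illustrative numbers, not measurements.
COST = {
    "dram": 200.0,  # assumed cost of one off-chip memory read
    "local": 1.0,   # assumed cost of one read from storage next to the compute
    "mac": 1.0,     # assumed cost of one multiply-accumulate operation
}


def relative_energy(num_macs: int, operands_per_mac: int, reuse_factor: int) -> float:
    """Estimate relative energy for `num_macs` multiply-accumulates.

    `reuse_factor` is how many times each operand fetched from off-chip
    memory is used before it is discarded (1 means no reuse at all).
    """
    if reuse_factor < 1:
        raise ValueError("reuse_factor must be at least 1")
    operand_reads = num_macs * operands_per_mac
    dram_reads = operand_reads / reuse_factor   # only the first use goes off-chip
    local_reads = operand_reads - dram_reads    # the remaining uses stay local
    return (
        num_macs * COST["mac"]
        + dram_reads * COST["dram"]
        + local_reads * COST["local"]
    )


if __name__ == "__main__":
    no_reuse = relative_energy(1000, 2, reuse_factor=1)
    with_reuse = relative_energy(1000, 2, reuse_factor=10)
    print(no_reuse)    # 401000.0
    print(with_reuse)  # 42800.0
```

Under these assumed costs, reusing each fetched operand ten times cuts the estimated energy by roughly a factor of nine, even though the amount of arithmetic is unchanged.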

Efficient solutions often depend on more than better hardware design, though that’s an important factor. Professor Sze makes it clear that one must also consider the algorithm when it comes to more efficient computing. She says that one example of how energy consumption might be incorporated into algorithm design is to consider that “deep neural networks are composed of layers… and some layers consume more energy than the others. So you might want to look at which layers consume the most amount of energy, and then try and optimize those layers to reduce the energy consumption so you're not changing the entire network, but only a subset of it, particularly the part that consumes the most amount of energy.” Often, algorithm efficiency comes back to the question of data movement: algorithms designed for more data reuse can make effective use of the low-cost local storage near the compute, which results in less data movement.
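
The layer-by-layer idea in the quote above can be sketched in a few lines. This is only an illustration under stated assumptions: the `Layer` fields, the per-operation costs `E_MAC` and `E_DRAM`, the helper `hottest_layers`, and the example network are all hypothetical, and a real energy estimate would come from measurement or a detailed hardware model.

```python
from dataclasses import dataclass

# Assumed relative energy costs (illustrative only).
E_MAC = 1.0     # one multiply-accumulate
E_DRAM = 200.0  # one off-chip memory access


@dataclass
class Layer:
    name: str
    macs: int           # number of multiply-accumulates in the layer
    dram_accesses: int  # number of off-chip memory accesses in the layer


def layer_energy(layer: Layer) -> float:
    """Rough energy estimate: compute cost plus data-movement cost."""
    return layer.macs * E_MAC + layer.dram_accesses * E_DRAM


def hottest_layers(layers: list[Layer], share: float = 0.5) -> list[Layer]:
    """Pick the highest-energy layers until they cover `share` of the total."""
    ranked = sorted(layers, key=layer_energy, reverse=True)
    target = share * sum(layer_energy(l) for l in layers)
    picked, running = [], 0.0
    for layer in ranked:
        if running >= target:
            break
        picked.append(layer)
        running += layer_energy(layer)
    return picked


if __name__ == "__main__":
    # Hypothetical network; the numbers are made up for the example.
    network = [
        Layer("conv1", macs=100_000, dram_accesses=1_000),
        Layer("conv2", macs=400_000, dram_accesses=500),
        Layer("conv3", macs=100_000, dram_accesses=250),
        Layer("fc", macs=50_000, dram_accesses=5_000),
    ]
    print([l.name for l in hottest_layers(network, share=0.75)])  # ['fc', 'conv2']
```

In this made-up network the fully connected layer does the least arithmetic but dominates the estimate because of its memory traffic, which is why ranking by energy rather than by operation count can point to a different subset of layers to optimize.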

While concerns around sustainability and minimizing the carbon footprint of emerging AI technologies are a key aspect of Professor Sze’s research, there are other reasons efficient computing is significant to her. The ability to bring functional AI programs to edge devices such as cellphones and cars will have many real-world applications, such as preserving privacy in healthcare situations, guaranteeing safety in autonomous cars even if the network goes down, and providing fair and equal access to AI even in situations with, for example, poor network service. She explains, “untethering yourself from the communication network increases accessibility… There's a lot of promising applications for AI; we want that to be accessible to everyone.”

Implications & Future Work

As the cultural conversation around AI grows, Professor Sze believes that awareness of efficiency will grow with it. She says, “now that there's a very clear cost associated with [AI], people are looking to figure out how to cut those costs,” which means her research is timelier than ever. Indeed, her group—in collaboration with Professor Sertac Karaman—recently published a study showing that 1 billion autonomous vehicles, each driving for one hour per day, would generate about the same volume of emissions as data centers currently do. Professor Sze clarifies that this is “not to say that we should not do self-driving cars, but as we're designing these vehicles, it's important to consider the energy efficiency of the compute because we're adding compute that wasn't there before.”
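
The scale of the fleet scenario above is easier to see with a back-of-the-envelope calculation. The sketch below only multiplies out the fleet size and driving time given in the text; the per-vehicle computing power of 840 W is an assumption that this article does not state, and the function name `fleet_compute_energy_twh` is hypothetical. It estimates energy only and makes no claim about emissions, which would also depend on how the electricity is generated.

```python
def fleet_compute_energy_twh(
    vehicles: int,
    hours_per_day: float,
    watts_per_vehicle: float,
    days: int = 365,
) -> float:
    """Annual energy used by onboard computers across a fleet, in terawatt-hours."""
    watt_hours = vehicles * hours_per_day * watts_per_vehicle * days
    return watt_hours / 1e12  # 1 TWh = 1e12 Wh


if __name__ == "__main__":
    # 1 billion vehicles and 1 hour per day come from the text;
    # 840 W per vehicle is an assumed value for illustration.
    annual = fleet_compute_energy_twh(
        vehicles=1_000_000_000,
        hours_per_day=1,
        watts_per_vehicle=840,
    )
    print(f"{annual:.1f} TWh per year")
```

Because the result scales linearly with `watts_per_vehicle`, halving the power drawn by each onboard computer halves the fleet-wide total, which is the sense in which the efficiency of the added compute matters.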

Solving this, Professor Sze thinks, will take a combination of many forces. She explains there’s a balance to be struck between specialized, maximally efficient hardware and more flexible hardware that can be applied to diverse situations and hopefully last longer. Professor Sze also thinks education is a vital aspect of her work, helping both students and those in industry understand how to evaluate all the potential solutions currently on the market and understand “what are the key questions you should be asking to determine whether or not these solutions are useful for your particular application.”

Fundamentally, Professor Sze calls sustainability “one of the grand challenges of today,” explaining that while computing has helped a lot of people, we must also mitigate the environmental cost of that computing, especially with the goal of making AI more widespread and available. She concludes, “as we move forward with these very promising and exciting technologies, we also need to consider their impact on the environment. So energy efficiency is key.”