When our ancestors began to walk upright on two legs, their hands were freed to manipulate objects and tools skillfully. From infancy, touch is crucial for exploring the world around us. So what if we could help robots explore their environment and experiment with objects through this same sense?

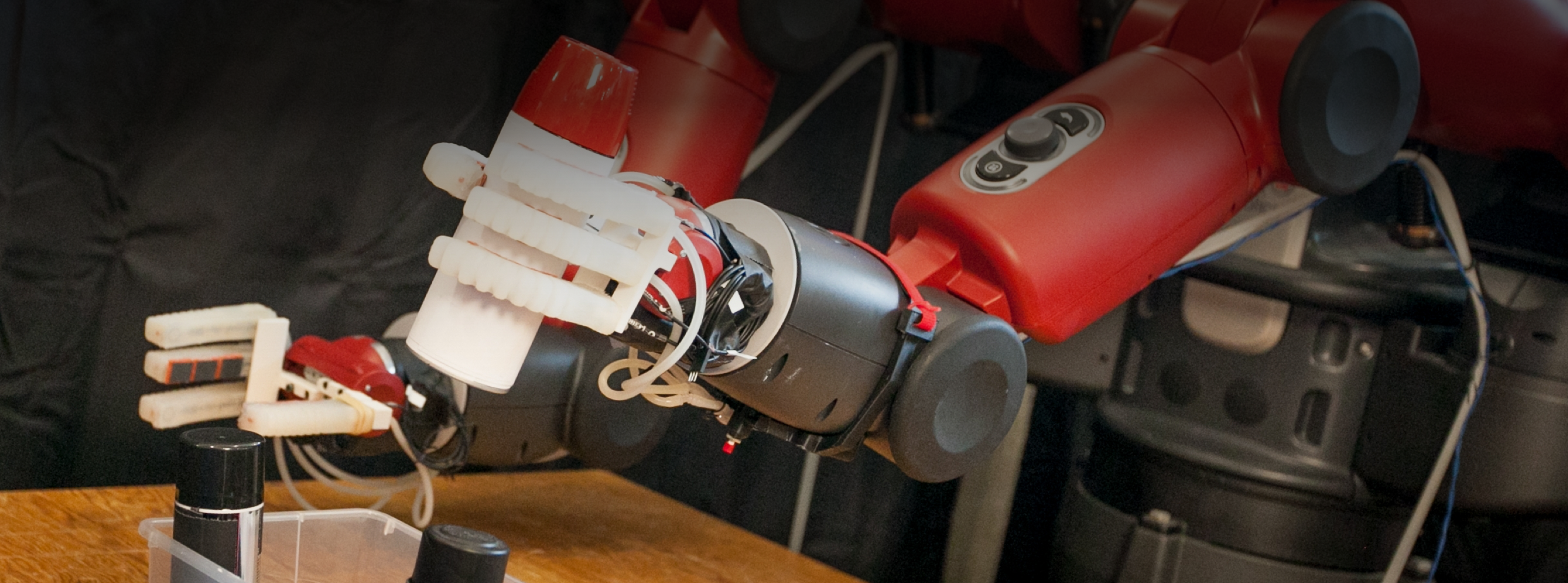

Professor Ted Adelson of MIT CSAIL is working to give robots a sense of touch by equipping them with fingers that are soft and sensitive, like human fingers.

“In order for robots to do good manipulation, they need good fingers,” he says. “We’re trying to make fingers that can match the capabilities of human fingers.”

Prof. Adelson was inspired to give robots a sense of touch by observing his own children. “Children have really fascinating abilities to use their fingers, to pick things up and manipulate them, even when they’re very young. I wanted to understand how my kids were extracting and using tactile data during manipulation. Since I’m a vision guy by training, I decided to build vision-based touch sensors.”

The tactile sensing technology that Prof. Adelson and his research group developed at CSAIL, called GelSight, has given rise to a whole family of devices. A spin-off company, GelSight Inc., is using the technology to build touch-based surface metrology devices and plans to build robotic fingers in the future.

Prof. Adelson’s robotic fingers contain tiny cameras that look at the surrounding soft skin. “The cameras look at the skin from the inside and can see how the skin is being deformed, and that gives us the touch signal. We can analyze that using image processing techniques, and it gives us an extremely high-resolution signal that is full of really great information,” he explains. Data coming from GelSight fingers can be used to assess the shape of a touched object as well as surface properties like texture and hardness. The fingers can also be used to measure force, shear, and slip.

Prof. Adelson says that the raw touch signal is only one step in a processing chain that includes robotic motion control and task planning. “The real test of a touch sensor is its ability to be integrated into a complete working system, allowing a robot to use its hands intelligently to accomplish chosen tasks,” he says.

Exploiting the data from multiple fingers that interact with the world in complex ways is a considerable challenge, and advances in AI and machine learning are key to making it feasible. “Because of the advances in machine learning and particularly deep learning, it’s now possible to teach robots very complex relationships between world properties and the touch signal,” Prof. Adelson says. In many cases, he adds, “problems that previously took months to solve can now be solved in a matter of days.”

If robots gain the ability to manipulate objects safely and skillfully with their fingers, they could assist us with a wide variety of tasks in business and at home. “If you ask what touch sensing could be good for, or what fingers could be good for, basically anything that people can do is an area where robots could do it as well,” says Prof. Adelson. “That could be in manufacturing, food preparation, logistics, health care, elder care, or domestic robots. Robots can be everywhere doing everything, and if they have good fingers and good hands, then they’re much more capable.”

Prof. Adelson enjoys working at the boundary between basic and applied research, a position that his CSAIL Alliances connections make possible. “If you do just basic research, it’s easy to get lost in your own little world and lose track of what the real problems are. And so as soon as you start working on real-world problems that are brought to you by people in industry, it focuses your mind and opens your eyes up to things you hadn’t thought of. It’s a terrific way of balancing real-world problems and abstract thinking. CSAIL is a great place to do that and the CSAIL Alliances program is great at fostering that kind of interaction.”