Written By: Audrey Woods

While it might feel like Large Language Models (LLMs) burst onto the scene just last year with the arrival of ChatGPT, research around Natural Language Processing (NLP) and the computer architecture necessary to make it possible has been going on for quite some time. The idea of using a machine to translate languages dates back to the 1940s, when the first computers were being applied in World War II. While this was not as simple as first imagined, by 1954 there was a rudimentary system to automatically translate from Russian to English, and soon after the field of NLP began to expand into broader applications, such as information retrieval, document summarization, text-to-speech conversion, and more.

The field of NLP is now a thriving subset of artificial intelligence research that aims to give computers the ability to understand text and speech much as humans do. While we’ve all interacted with NLP systems, from the voice-to-text feature on our cellphones to customer service chatbots, recent innovation has enabled the lifelike interactive abilities of programs like ChatGPT, and research at institutions such as MIT CSAIL hints at even more exciting advances to come.

Working at the cutting edge of this area, CSAIL Assistant Professor Yoon Kim believes there will be “very significant commercial and economic impact of these technologies across various sectors.”

Finding His Interest

As Professor Kim tells it, he “accidentally stumbled” upon the field of NLP research. Having studied math and economics as an undergraduate, he began his career in finance. However, realizing the limitations of his knowledge and wanting to learn more, he started a part-time master’s degree in statistics at Columbia. While there, he took a machine learning course and was “super intrigued by it.” This led to another part-time master’s degree in machine learning and data science at NYU where—again by chance—he took a course called natural language processing.

“I had no idea what that was,” Professor Kim says, explaining that it was just an elective to fulfill a requirement. But the course happened to coincide with the arrival in NLP research of both deep learning methods and word2vec, a technique for generating word embeddings, which Professor Kim says “blew me away.” He admits, “that’s what got me into this field and made me want to quit work and go back to school full time to do research in this.”

Professor Kim says, “I’ve been in the field ever since.”

The World of Large Language Models

According to Professor Kim, NLP research has seen some major changes in the last five years that have significant implications for how these programs might be used in the future. The biggest one, he explains, is the shift from creating specific models to solve particular tasks toward general-purpose models that can adapt to any task involving language. “For example,” he says, “in the past, folks were building question answering specific systems. The pipeline now is you take this pretrained language model and then you adapt it to become a question answering system.”

While terms like neural networks, LLMs, and transformers might seem hazy to non-experts, Professor Kim explains that “basically, neural networks are functions that take as input stuff. Stuff can be language in the context of language models [or] pixels if you’re doing image classification… Then this function predicts stuff.” A transformer is a particular type of neural network, and an LLM is typically a transformer trained to predict the next word given the words that precede it.
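To make the idea of next-word prediction concrete, here is a minimal Python sketch. It is not a transformer and not anything from Professor Kim's research: it swaps in a far simpler technique, counting which word most often follows each word in a tiny made-up corpus. The function names (`train_bigram_model`, `predict_next`, `autocomplete`) and the corpus are hypothetical, chosen only for illustration. What it shares with an LLM is the shape of the task: a function takes previous words as input and predicts the next one.

```python
from collections import Counter, defaultdict


def train_bigram_model(text):
    """Count, for each word, how often each other word follows it."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for prev_word, next_word in zip(words, words[1:]):
        counts[prev_word][next_word] += 1
    return counts


def predict_next(counts, prev_word):
    """Return the most frequent follower of prev_word, or None if unseen."""
    followers = counts.get(prev_word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


def autocomplete(counts, prompt, max_new_words=5):
    """Extend the prompt one predicted word at a time."""
    words = prompt.lower().split()
    if not words:
        return ""
    for _ in range(max_new_words):
        next_word = predict_next(counts, words[-1])
        if next_word is None:
            break  # the model never saw this word, so it cannot continue
        words.append(next_word)
    return " ".join(words)


# Toy corpus (an assumption for illustration; real models see vastly more text).
corpus = "the cat sat on the mat . the cat ate the fish . the dog sat on the rug ."
model = train_bigram_model(corpus)

print(predict_next(model, "the"))    # cat
print(autocomplete(model, "sat", 2))  # sat on the
```

This toy model only ever looks at the single previous word, which is why its completions quickly become repetitive. A transformer-based LLM conditions on a long stretch of preceding text and learns its predictions rather than counting them, but the input-to-prediction framing is the same.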

“Philosophically,” Professor Kim says, “these models are just auto-complete systems,” not all that different from the autocomplete in Google Search. The difference is the size of the neural network, which is far larger and more complex. To Professor Kim, this enormous size, and the resulting complexity, bears on the question of whether or not LLMs qualify as intelligent.

“One useful thing to think about is there’s not necessarily a correlation between the simplicity of an objective that a system is optimizing versus the complexity that emerges.” Comparing it to evolution, he says that gene propagation is a theoretically simple objective, but in solving this optimization problem, incredible complexity emerges. Similarly, a model trained to predict the next word, especially one trained on billions or even trillions of tokens, ends up producing impressive results which can, in some sense, be interpreted as a kind of intelligence.

What Comes Next?

While the media narrative around LLMs can stray into the dramatic, the idea of these programs somehow becoming all-powerful to the extent of posing a threat to humanity seems “very far-fetched” to Professor Kim, who would much prefer that we as a society focus on the real risks and benefits of these technologies. There are risks, of course, and he says, “as with all applications, there should be careful vetting [and] scoping.” But he offers the perspective that, while making these technologies available has ethical implications, there are also large ethical implications of not making them available. Even though there is still much work to be done in making these systems safe enough for deployment in many domains, LLMs offer a way to “level the playing field” in a great many disciplines, such as providing legal counsel to those who might not be able to afford it, personalized tutoring to students across various subjects, and programming assistance to those without programming backgrounds. In the same way that the search engine improved productivity and opportunity for millions around the globe, Professor Kim believes that LLMs have much to offer the general population and, because of that, there’s a strong argument for “democratizing these technologies and making them accessible to as many people as possible.”

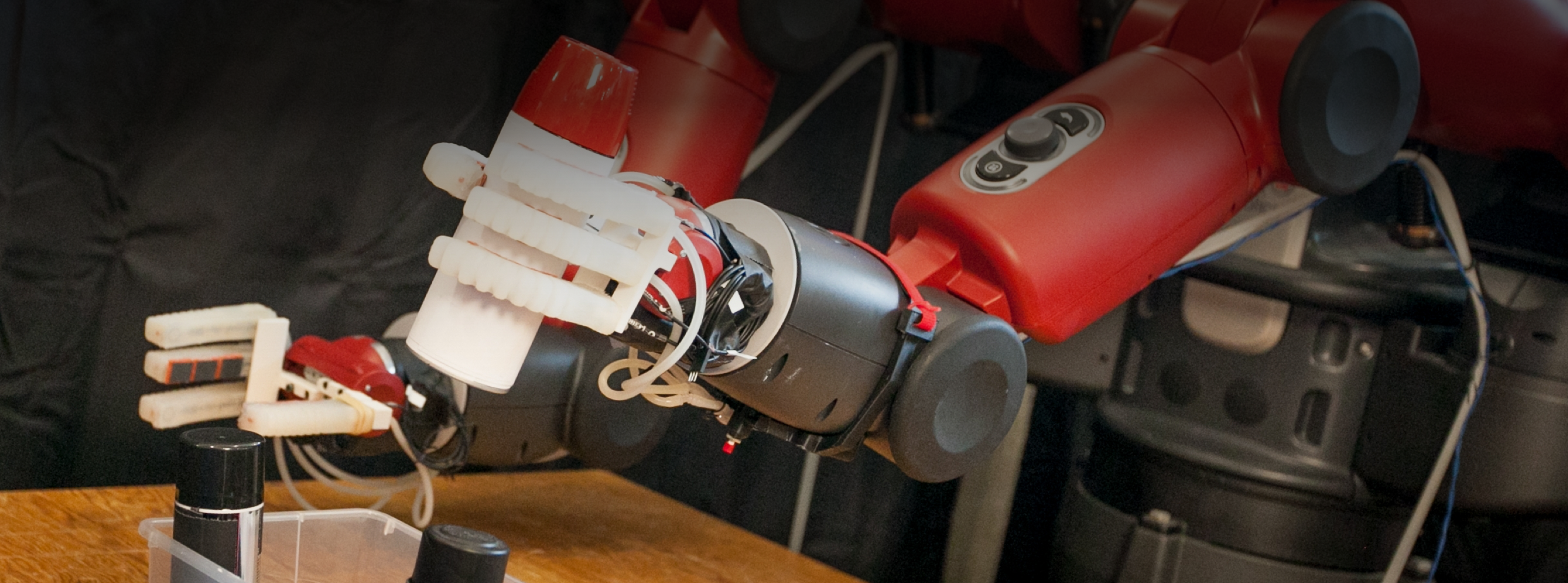

What does that mean for Professor Kim? In part, it means trying to make the programs cheaper (ChatGPT is infamously expensive to run), which requires fresh techniques and methods for making the models more efficient, a current focus of Professor Kim’s research. He says, “there’s also a lot of vibrant work around training multimodal language models so they can naturally incorporate images,” expanding what these neural networks are capable of. Professor Kim can even see this being generalized to embodied environments, where a robot might absorb information about its environment, combine that information with instructions given by a human, and then, instead of predicting text, predict the next action. He thinks “this could result in more useful systems that are embodied and have a more grounded knowledge of the world.”

The enormous societal response to LLMs such as ChatGPT, Bard, Bing AI, and HuggingChat showed everyone—Professor Kim included—that there is a demand for what these programs offer. Whether it’s an assistant system that can interact with you, an AI resource that can offer specialized expert advice, or some other application, imaginations around the world have been activated to bring this exciting research to the market. And, with his efforts to develop more efficient training methods through model reuse, trialing new types of neural network architecture, and generally understanding the capabilities and limitations of LLMs, Professor Kim will continue to offer key insight in this dynamic and impactful area.